You're Prompting AI Wrong. Here's the Data-Backed Way to Do It Right.

Key insights for managers and aspiring AI Operators from a 79-page academic review of 1,565 research papers.

Hey Friends 👋 Happy Tuesday

Here’s another weekly dose of AI ways of working.

You spend hours wrestling with an AI, trying to get the right output. You tweak a word here, add ‘please’ there, and hit ‘generate’ like you’re pulling a slot machine lever. Sometimes you win, but mostly you get generic, unusable nonsense. It feels like a guessing game you can’t win.

What if there were a rulebook for the slot machine? A systematic, evidence-based guide that turns prompting from a dark art into a repeatable method. There is. A comprehensive academic paper synthesised 1,565 research papers into a 79-page guide to prompt engineering. It’s dense, but it provides a clear framework for getting more consistent, higher-quality results from LLMs.

Today, I’m breaking down the most actionable insights for operators, COOs, and PMs. You’ll learn the core techniques that separate amateur prompting from professional-grade AI interaction. And yes, you can download the full academic paper for free at the end.

A structured framework for systematic AI interaction—moving from chaos to predictable outcomes.

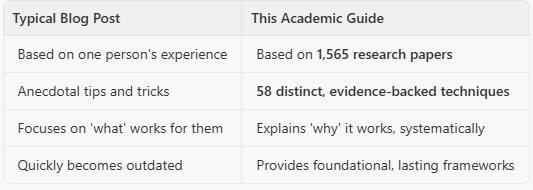

Why This Guide (Not Just Another Blog Post)

Most advice on prompting is based on one person’s trial and error. It’s anecdotal and often outdated within weeks. This is different.

This guide is the output of a systematic review of 1,565 academic papers on prompting. It’s not one person’s opinion; it’s a synthesis of the published research.

That’s why this is a game-changer. It gives you a reliable system, not just a list of tricks.

What You’ll Learn

We’ll translate the academic jargon into four powerful, actionable techniques you can use immediately to improve your AI outputs. You’ll learn how to stop guessing and start engineering your prompts for predictable success.

Time to read this post: 7 minutes

Time saved per week: 2-3 hours of re-writing and correcting AI outputs

The Core Four: Actionable Prompting Frameworks

Here are the four most critical techniques from the research that you can apply right away.

1. Teach with Examples: In-Context Learning (ICL)

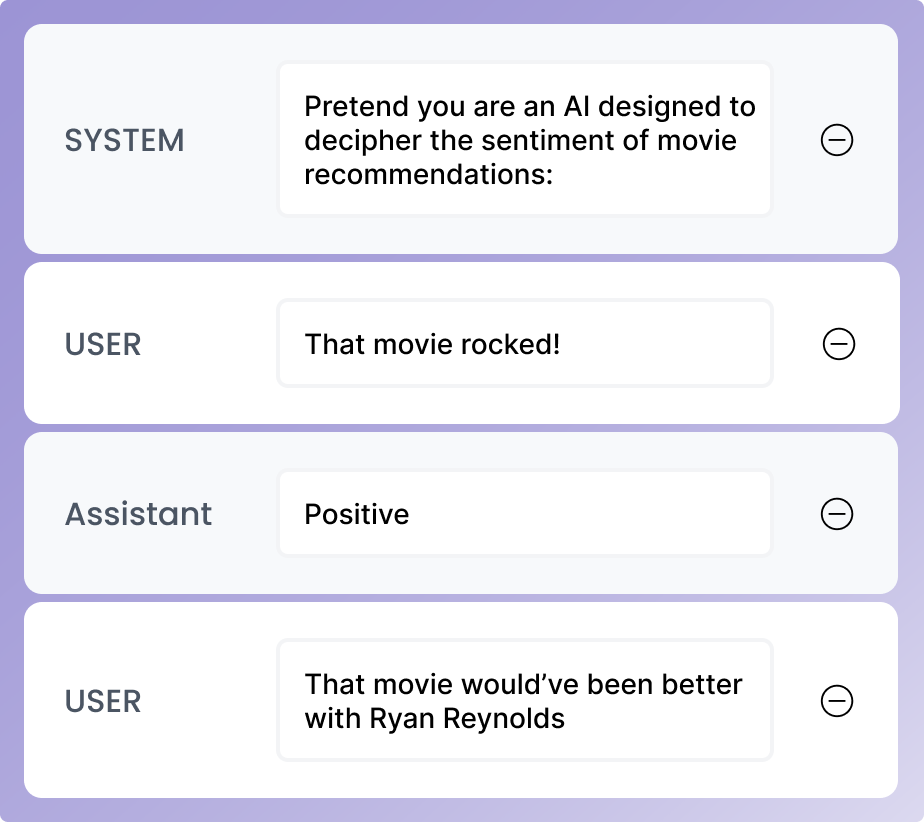

Instead of just telling the AI what to do, show it. ICL means providing a few examples of the task directly in your prompt.

The research identifies six design decisions that make few-shot prompting more reliable. Quantity matters: performance generally improves with more examples, particularly up to about 20 for standard models, with gains continuing beyond that for long-context models. Ordering is surprisingly critical: in some settings, the sequence of your examples can shift accuracy from below 50% to over 90%. Format consistency helps: use the same structure (such as “Q: / A:”) across all examples. Similarity helps too: examples that closely resemble your actual query tend to work best. Label quality can matter less than you might think: some studies find that large models pick up the structure of the examples even when a few labels are wrong, although in other settings incorrect labels clearly hurt. Finally, label distribution should be balanced to avoid bias toward any single category.

Operator’s Action: When asking an AI to categorise customer feedback, don’t just ask. Show it first. Provide 2-3 examples for each category (Bug Report, Feature Request, Billing Issue) before the real data.

A prompt showing clear examples of a classification task before the final instruction—this is In-Context Learning in action.
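If you assemble prompts like this in a script rather than by hand, the sketch below shows one way to do it in Python. The example feedback lines, the `build_few_shot_prompt` and `label_counts` names, and the “Feedback: / Category:” format are all assumptions made for illustration, not something prescribed by the paper. It keeps one format across every example and lets you check that the labels are balanced.

```python
from collections import Counter

# Hypothetical labelled examples; swap in real feedback from your own data.
EXAMPLES = [
    ("The export button crashes the app on Safari.", "Bug Report"),
    ("Please add a dark mode to the dashboard.", "Feature Request"),
    ("I was charged twice for my March invoice.", "Billing Issue"),
    ("Login fails with a blank screen after the update.", "Bug Report"),
    ("It would help to schedule reports weekly.", "Feature Request"),
    ("My card was declined but the money left my account.", "Billing Issue"),
]


def build_few_shot_prompt(examples, query):
    """Return a classification prompt that uses one consistent format throughout."""
    labels = sorted({label for _, label in examples})
    lines = [
        "Classify each piece of customer feedback as one of: " + ", ".join(labels) + ".",
        "",
    ]
    for text, label in examples:
        lines.append(f"Feedback: {text}")
        lines.append(f"Category: {label}")
        lines.append("")
    # The real item uses the same format and leaves the category open.
    lines.append(f"Feedback: {query}")
    lines.append("Category:")
    return "\n".join(lines)


def label_counts(examples):
    """Count examples per label so an unbalanced set is easy to spot."""
    return Counter(label for _, label in examples)


prompt = build_few_shot_prompt(EXAMPLES, "The invoice PDF shows the wrong VAT rate.")
print(prompt)
print(label_counts(EXAMPLES))
```

Because ordering can change results, it may be worth trying a couple of different example orders on a small test set before settling on one.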

2. Force the Reasoning: Chain-of-Thought (CoT)

For complex tasks, AI often rushes to an answer and makes mistakes. CoT forces it to slow down and ‘show its work’. The simplest way to do this is with a single phrase.

Operator’s Action: When you have a complex reasoning task (such as “Based on these three reports, what are the top 5 risks for Q3?”), simply add this phrase to the end of your prompt:

“Let’s think step by step.”

This simple addition, known as Zero-Shot CoT, has been shown to improve the AI’s reasoning on many tasks, leading to more accurate and logical conclusions. The research reports notable gains on mathematics and reasoning benchmarks without requiring any examples.
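If your prompts live in code, a tiny helper can add the phrase for you. This is a minimal sketch; the function name `with_zero_shot_cot` is made up for illustration, and the only thing it does is append the trigger phrase when it is not already there.

```python
COT_TRIGGER = "Let's think step by step."


def with_zero_shot_cot(prompt: str) -> str:
    """Append the Zero-Shot CoT phrase unless the prompt already ends with it."""
    base = prompt.rstrip()
    if base.endswith(COT_TRIGGER):
        return base
    return f"{base}\n\n{COT_TRIGGER}"


question = "Based on these three reports, what are the top 5 risks for Q3?"
print(with_zero_shot_cot(question))
```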

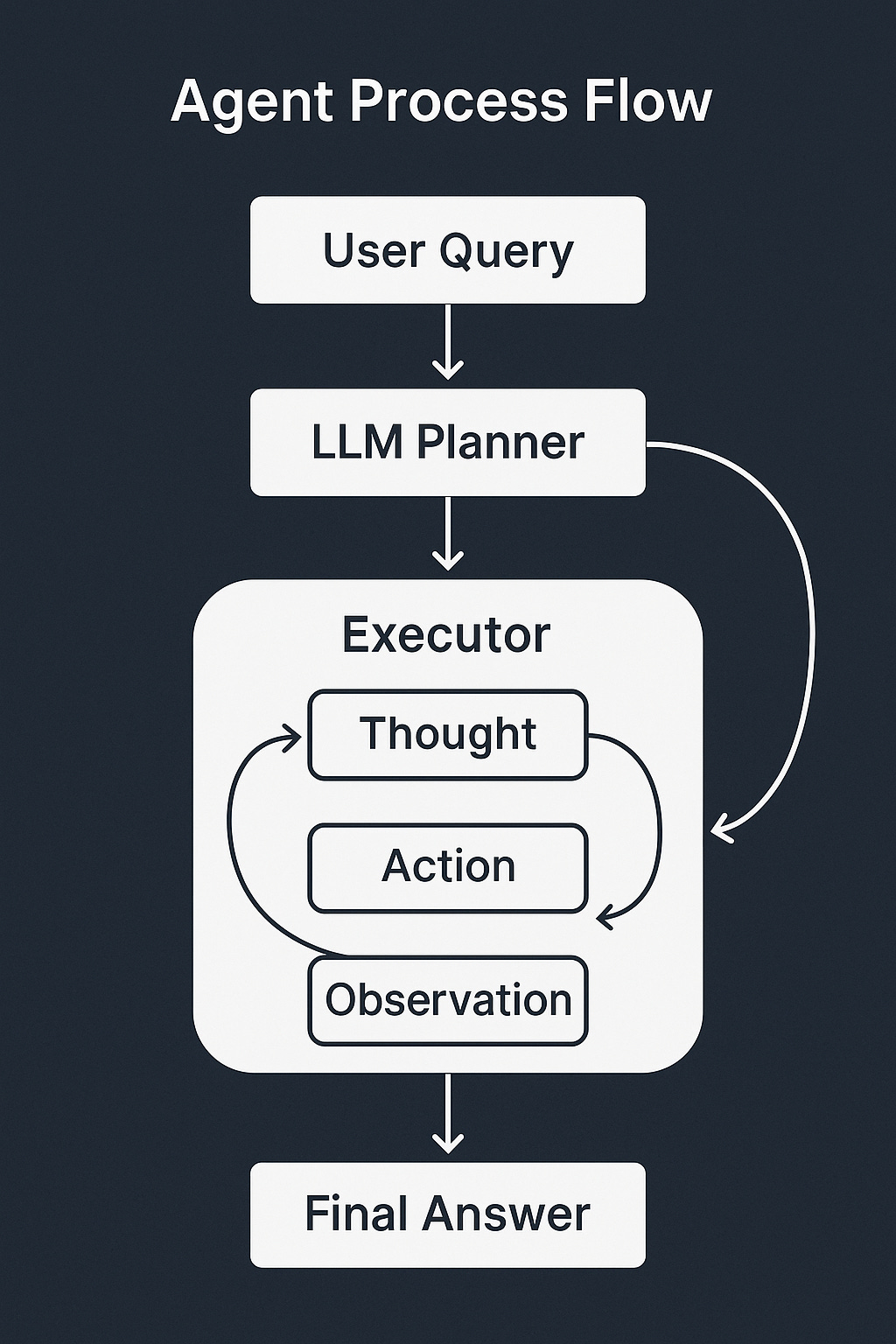

3. Break It Down: Decomposition

Complex projects aren’t tackled in one go, and neither should complex prompts. The research validates the ‘decomposition’ method, where you instruct the AI to break a large task into smaller, sequential sub-problems.

Operator’s Action: Instead of asking the AI to “Write a project plan for our new product launch,” guide it with decomposition.

“First, create a list of all the major phases for a product launch. Then, for each phase, list the key deliverables. Finally, estimate a timeline for each deliverable.”

This turns a single, overwhelming request into a structured process that the AI can execute far more reliably. The guide identifies several decomposition techniques, including Least-to-Most (starting with the simplest sub-problem and building up), Plan-and-Solve (planning the steps first, then executing each), and Tree-of-Thought (exploring multiple reasoning paths simultaneously).

A visual representation of task decomposition, breaking a complex query into planned, iterative sub-tasks with feedback loops.
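The prompt above asks for all three steps in one message. Another way to apply the same idea is to send each step as its own call and feed the earlier answers forward. The sketch below assumes a hypothetical `ask` function (prompt in, reply text out) that you would wire to whichever model you use; `fake_ask` is only a placeholder so the example runs offline. It is a simple chaining pattern, not an implementation of Least-to-Most or Plan-and-Solve as defined in the paper.

```python
from typing import Callable, List

# Hypothetical stand-in for a model call: takes a prompt, returns the reply text.
AskFn = Callable[[str], str]

STEPS = [
    "Create a list of all the major phases for a product launch.",
    "For each phase listed above, list the key deliverables.",
    "Estimate a timeline for each deliverable listed above.",
]


def run_decomposed(ask: AskFn, steps: List[str]) -> List[str]:
    """Run the steps one at a time, passing earlier answers forward as context."""
    answers: List[str] = []
    for number, step in enumerate(steps, start=1):
        context = "\n\n".join(
            f"Step {i} result:\n{a}" for i, a in enumerate(answers, start=1)
        )
        current = f"Step {number}: {step}"
        prompt = f"{context}\n\n{current}" if context else current
        answers.append(ask(prompt))
    return answers


def fake_ask(prompt: str) -> str:
    """Placeholder model so the sketch runs offline; it echoes the last prompt line."""
    return "(model reply to: " + prompt.splitlines()[-1] + ")"


for answer in run_decomposed(fake_ask, STEPS):
    print(answer)
```

Splitting the calls costs more round trips, but it lets you review or correct each intermediate result before the next step builds on it.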

4. Make It Check Itself: Self-Criticism

Even with good prompts, AIs can make errors. A more advanced technique is to build a verification step into the prompt itself. This is the principle of ‘self-criticism’.

Operator’s Action: For high-stakes outputs like a client proposal or a financial summary, ask the AI to verify its own work.

“Generate a summary of the attached financial report. After you generate the summary, review it step-by-step and verify that every number in the summary matches the numbers in the original report. List any discrepancies you find before providing the final, corrected summary.”

This forces a loop of generation and verification, catching many errors before they get to you. The research identifies several self-criticism methods, including Chain-of-Verification (where the AI generates an answer, creates verification questions, answers them, and produces a final output) and Self-Refine (an iterative feedback loop where the AI critiques and improves its work). A related method, Self-Consistency (generating multiple answers and selecting the most common one), is classed in the paper as an ensembling technique rather than self-criticism.
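The generate-then-verify loop can also be run as separate calls from a script. The sketch below is loosely in the spirit of Self-Refine and is not the paper’s reference implementation. The `ask` function is a hypothetical model call, the “NO ISSUES” stop phrase is a convention invented for this example, and `make_scripted_model` is a fake model that replays canned replies so the code runs offline.

```python
from typing import Callable, List

AskFn = Callable[[str], str]  # hypothetical model call: prompt in, reply text out


def generate_with_self_check(ask: AskFn, task: str, source: str, max_rounds: int = 2) -> str:
    """Draft an answer, ask for a critique, and revise until no issues are reported."""
    draft = ask(f"{task}\n\nSource:\n{source}")
    for _ in range(max_rounds):
        critique = ask(
            "Compare the draft with the source and list every discrepancy. "
            "Reply with exactly NO ISSUES if there are none.\n\n"
            f"Source:\n{source}\n\nDraft:\n{draft}"
        )
        if critique.strip().upper() == "NO ISSUES":
            break
        draft = ask(
            "Rewrite the draft so that it fixes these discrepancies.\n\n"
            f"Source:\n{source}\n\nDraft:\n{draft}\n\nDiscrepancies:\n{critique}"
        )
    return draft


def make_scripted_model(replies: List[str]) -> AskFn:
    """Return a fake model that plays back canned replies in order (demo only)."""
    queue = list(replies)
    return lambda prompt: queue.pop(0)


model = make_scripted_model([
    "Revenue was 12m.",               # first draft, with a wrong number
    "Revenue is 21m in the source.",  # critique finds the mismatch
    "Revenue was 21m.",               # revised draft
    "NO ISSUES",                      # second critique passes
])
print(generate_with_self_check(model, "Summarise the report.", "Revenue: 21m"))
```

The `max_rounds` cap is there so the loop always ends, even if the model keeps finding something to change. A model checking its own work can still miss errors, so a human review of high-stakes numbers remains worthwhile.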

What You’ve Built

By moving away from guessing and adopting these four frameworks, you’ve built a systematic process for interacting with AI. You’ve stopped being a user and started being an operator.

Before:

• Trial-and-error prompting: 15-20 minutes per task

• Inconsistent, often unusable results

• Manual re-work and correction: 30+ minutes

• Total: 45-60 minutes per complex task

After:

• Structured, framework-based prompting: 5 minutes

• Consistent, reliable, and predictable results

• Minor review and edits: 5-10 minutes

• Total: 10-15 minutes per complex task

Time Saved: At least 30 minutes per task (45-60 minutes down to 10-15), which adds up to hours every week.

Get the Full Guide

These four techniques are just the beginning. The full 79-page academic paper provides a deep dive into 58 distinct text-based prompting techniques, and also covers security considerations (prompt hacking and hardening measures), benchmarking frameworks, multimodal prompting (images, audio, video), and advanced agent-based techniques.

The guide covers critical topics for operators, including how to evaluate prompting techniques systematically, how to protect against prompt injection attacks, and how to handle alignment issues like bias and overconfidence. It’s a comprehensive resource grounded in rigorous academic research, not marketing hype.

Download the full, free Prompt Engineering guide here →

What to Build Next

Once you’ve mastered these four core techniques, you can explore more advanced frameworks from the guide. Consider implementing Role Prompting to assign specific expertise to the AI (such as “You are an experienced COO”), which can improve domain-specific outputs. Experiment with Emotion Prompting by incorporating phrases of psychological relevance (such as “This is important to my career”) to potentially enhance performance on benchmarks and open-ended tasks. Explore Ensembling techniques like Self-Consistency, where you generate multiple answers and select the most common one for higher confidence. Finally, investigate Retrieval Augmented Generation (RAG) to connect your AI to external knowledge bases and real-time data sources.
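As a starting point for the Self-Consistency idea mentioned above, here is a minimal majority-vote sketch. The `ask` function is again a hypothetical model call, and the scripted replies are made-up demo data. In practice the technique samples several step-by-step answers with some randomness (for example a non-zero temperature) and votes on the final answers only; this sketch assumes each reply already is the final answer.

```python
from collections import Counter
from typing import Callable, List, Tuple

AskFn = Callable[[str], str]  # hypothetical model call: prompt in, reply text out


def self_consistency(ask: AskFn, prompt: str, samples: int = 5) -> Tuple[str, float]:
    """Ask the same question several times and return the most common answer.

    The second value is the share of samples that agreed with the winner.
    """
    answers = [ask(prompt).strip() for _ in range(samples)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / samples


def make_scripted_model(replies: List[str]) -> AskFn:
    """Return a fake model that plays back canned replies in order (demo only)."""
    queue = list(replies)
    return lambda prompt: queue.pop(0)


model = make_scripted_model(["42", "42 ", "41", "42", "40"])
answer, agreement = self_consistency(model, "What is 6 x 7?", samples=5)
print(answer, agreement)
```

A low agreement share is a useful signal on its own: it suggests the question is ambiguous or the model is unsure, and the output deserves a closer look.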

What’s the most frustrating thing you’re trying to get an AI to do?

Andres

References & Additional Context

This post is based on a comprehensive systematic review of prompting techniques, conducted using the PRISMA methodology. The review analysed 1,565 academic papers from arXiv, Semantic Scholar, and ACL to identify 58 distinct text-based prompting techniques. The full paper includes detailed taxonomies, benchmarking results (including tests against the MMLU dataset), and a real-world case study on identifying signals of suicidal crisis in support text.

The guide is maintained as an evolving resource at LearnPrompting.org, with an up-to-date list of terms and techniques. It represents the first comprehensive attempt to standardise terminology and create a robust directory of prompting methods for both developers and researchers.

Key Research Highlights:

• Systematic Review: Machine-assisted review using GPT-4 with 89% precision and 75% recall

• Scope: Focuses on prefix prompts (not cloze prompts) and hard prompts (not soft/continuous prompts)

• Coverage: Text-based, multilingual, multimodal, and agent-based prompting techniques

• Practical Focus: Task-agnostic techniques that can be quickly understood and implemented

For operators, managers or COOs, the most relevant sections are Section 2.2 (Text-Based Techniques), Section 4.2 (Evaluation), Section 5.1 (Security), and Section 6 (Benchmarking). These sections provide immediately actionable frameworks for improving AI reliability in operational contexts.