The AI Agent Pattern Behind a $2B Acquisition

Forget prompting. The future is “Context Engineering”—and it turns your forgetful AI assistant into a reliable project partner.

Hey Friends 👋 Happy Thursday

Here’s another weekly dose of AI ways of working.

You’ve felt the frustration. You’re 20 messages deep into a complex task with an AI agent. You’ve fed it documents, clarified requirements, and iterated on the code. Then, it happens. The agent gets confused, the context window degrades, or you accidentally hit /clear.

Suddenly, the AI has total amnesia. All that progress is gone. It feels less like delegating and more like babysitting a brilliant but impossibly forgetful intern.

This isn’t a user error; it’s a system limitation. And overcoming it requires a new skill: context engineering. This is the emerging discipline of designing the information environment in which an AI agent operates. It’s the secret to making agents reliable, and it reflects the principles that are making AI agent companies like Manus AI worth billions.

The core idea is simple but profound: stop treating the chat window like a permanent brain and start using the filesystem as a persistent one.

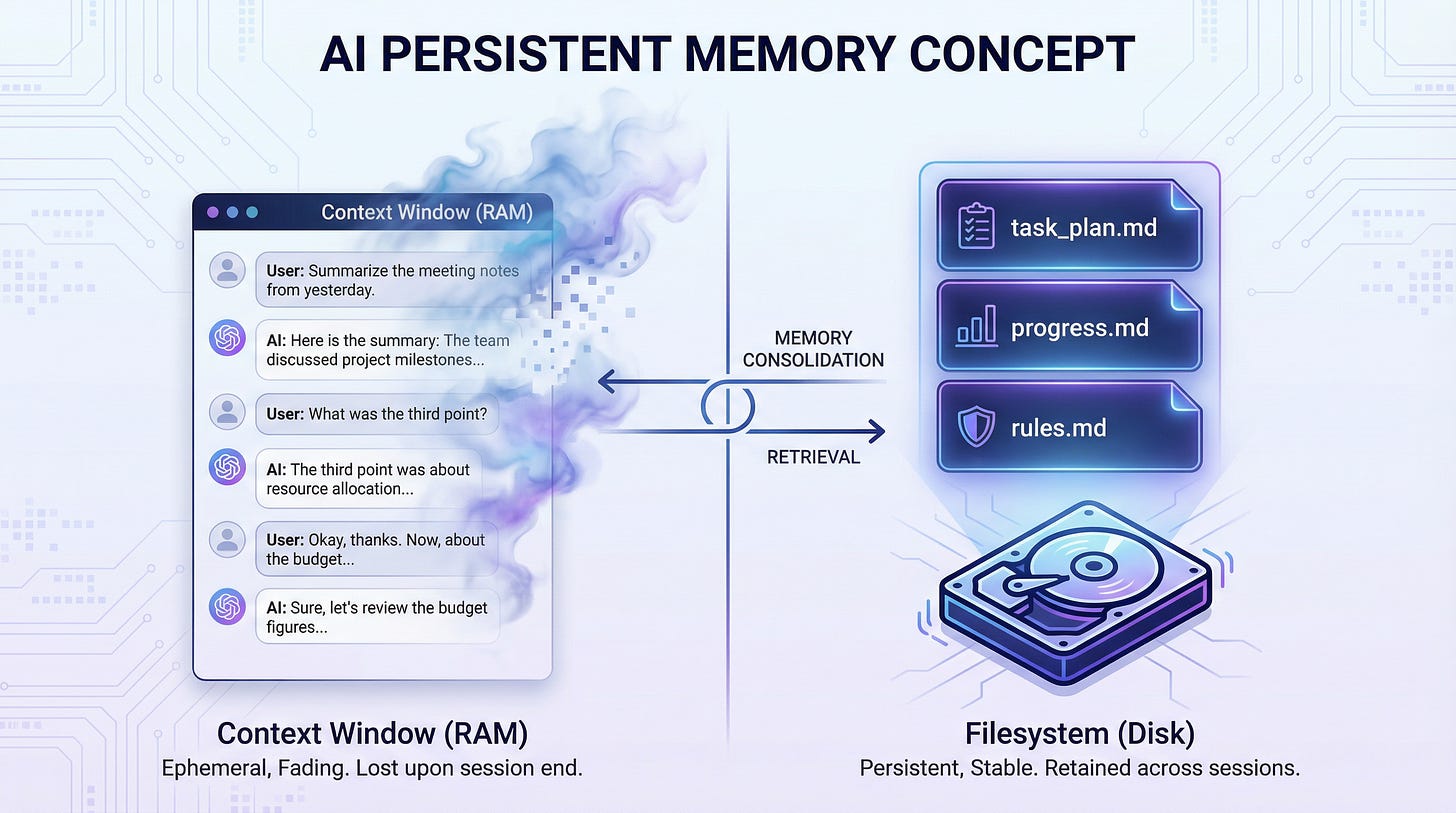

The core concept: A volatile Context Window (RAM) vs a persistent Filesystem (Disk)

Your AI’s Chat Window is RAM, Not a Hard Drive

The fundamental principle of context engineering is this:

Context Window = RAM (volatile), Filesystem = Disk (persistent). Anything important gets written to disk.

An AI agent’s chat conversation is like your computer’s RAM: incredibly fast but temporary. When the conversation gets too long or you clear the chat, that memory is wiped. Your project’s files, however, are like your hard drive. They persist.

Instead of trying to stuff everything into the agent’s short-term memory, you create a simple, external memory system using plain text files.

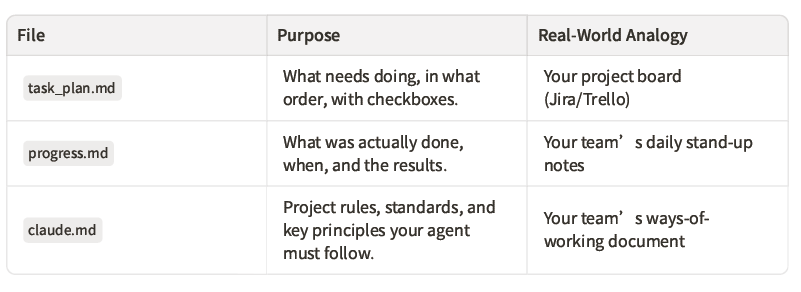

The Community’s Hack: A 3-File System for Perfect Recall

This pattern, gaining widespread adoption in the open-source AI community, relies on just three simple Markdown files that live in your project directory. For Claude Code users, the setup is immediate and practical: CLAUDE.md defines the project rules, task_plan.md tracks the tasks, and progress.md logs what’s been done.

This system acts as the AI’s external brain, giving it a perfect, persistent memory of your project.

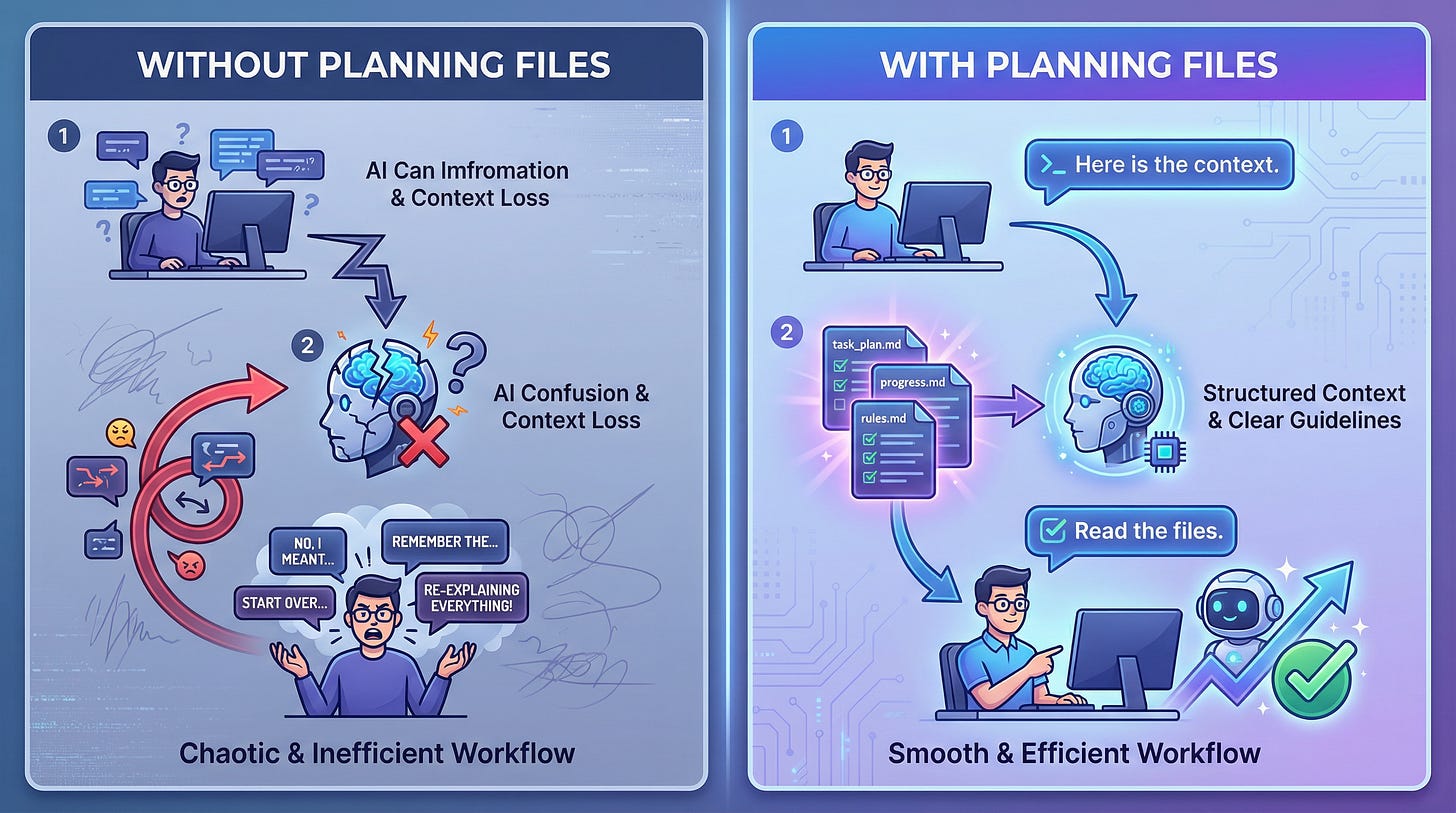

Why It Matters: The Recovery Scenario

The difference this makes is night and day. The recovery scenario shows the power of this approach better than any abstract explanation.

Without Planning Files:

You work with an agent for 25 messages. The context degrades, and the agent gets confused. You type /clear. The agent has zero memory of what you’ve built. You spend the next 20 minutes re-explaining everything, inevitably missing details.

With Planning Files:

You work with an agent for 25 messages, and it updates the files as it goes. The context degrades, and the agent gets confused. You type /clear. You give one simple instruction:

“Read the project files and continue where we left off.”

The agent reads the files and knows exactly what’s been done, what’s next, and the rules to follow. Zero progress is lost.

The dramatic difference between chaotic re-explanation and smooth recovery

This is the workflow: Read from disk at the start of a session. Write to disk as the work progresses and again before the session ends. Everything important lives on the filesystem, not in the conversation.
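To make that read-then-write cycle concrete, here is a minimal Python sketch. It assumes the three file names used in this post; the helper names `build_resume_prompt` and `log_progress` are hypothetical, and the timestamped bullet format for progress.md is just one possible convention. In Claude Code the agent itself reads and edits these files, so treat this as an illustration of the loop rather than a required tool.

```python
from datetime import datetime
from pathlib import Path

# File names taken from this post; everything else here is illustrative.
MEMORY_FILES = ("CLAUDE.md", "task_plan.md", "progress.md")


def build_resume_prompt(project_dir: str) -> str:
    """Read from disk: gather whichever memory files exist into one message."""
    sections = []
    for name in MEMORY_FILES:
        path = Path(project_dir) / name
        if path.exists():
            text = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n{text}")
    sections.append("Read the project files above and continue where we left off.")
    return "\n\n".join(sections)


def log_progress(project_dir: str, note: str) -> None:
    """Write to disk: append a timestamped entry to progress.md."""
    stamp = datetime.now().strftime("%Y-%m-%d %H:%M")
    # Append mode creates progress.md if it does not exist yet.
    with open(Path(project_dir) / "progress.md", "a", encoding="utf-8") as f:
        f.write(f"- {stamp}: {note}\n")
```

Appending to progress.md instead of rewriting it keeps a running history, so a fresh session can see the order in which things happened.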

Why This Principle is Worth Billions

While Manus AI’s exact internal architecture isn’t public, its success points to a crucial truth: the ability to create reliable, autonomous agents that can execute complex, multi-step tasks is immensely valuable. Manus AI went from a $500 million valuation to a $2 billion acquisition by Meta in just eight months while reaching over $100 million in annual recurring revenue, which suggests it tackled this reliability problem at scale.

The value isn’t in a single tool, but in the underlying principle of persistence and reliability. The persistent planning pattern, now popular in the open-source community, is a direct and practical application of this billion-dollar principle.

This isn’t just a clever trick for developers. It’s a foundational pattern that makes autonomous AI agents actually work in production environments. And now, with tools like Claude Code becoming more accessible, this same pattern is available to operators, managers, and founders who want to build real applications without becoming software engineers.

From Prompter to Engineer

If you’re a project manager, operations lead, or founder, this pattern unlocks a new capability: the ability to delegate complex, multi-step work to AI agents with confidence. You already know how to break down projects, define requirements, and manage workflows. Those are the exact skills needed for context engineering.

The difference is that instead of managing human team members, you’re managing an AI agent’s information environment. You’re structuring the context it needs to succeed, defining the rules it should follow, and tracking the progress it makes—all using simple text files.

This is the future of operations work. The managers who learn to structure context effectively will be the ones who can build and automate at a scale that was previously impossible without large engineering teams.

Your Turn: Build Your First Persistent Agent

Ready to try it? The best way to understand the power of this pattern is to use it.

1. Create a new project folder.

2. Inside it, create three files: CLAUDE.md, task_plan.md, and progress.md.

3. Start your next Claude Code session by telling it to read those files.

A real example: CLAUDE.md defining project rules, task_plan.md tracking tasks, and progress.md logging what’s been done
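If you would rather script the first two steps, here is a minimal Python sketch. The post names the three files but does not prescribe their contents, so the starter templates below are assumptions, and the function name `scaffold` is hypothetical.

```python
from pathlib import Path

# Hypothetical starter contents: placeholders to be replaced with your own
# rules and tasks. Only the file names come from this post.
TEMPLATES = {
    "CLAUDE.md": "# Project rules\n\n- (rules the agent must always follow)\n",
    "task_plan.md": "# Task plan\n\n- [ ] (first task)\n",
    "progress.md": "# Progress log\n\n",
}


def scaffold(project_dir: str) -> list[str]:
    """Create the project folder and any missing memory files.

    Existing files are left untouched, so re-running this never overwrites
    notes the agent has already written. Returns the names it created.
    """
    root = Path(project_dir)
    root.mkdir(parents=True, exist_ok=True)
    created = []
    for name, body in TEMPLATES.items():
        path = root / name
        if not path.exists():
            path.write_text(body, encoding="utf-8")
            created.append(name)
    return created
```

Calling `scaffold("my_project")` once creates the folder and all three files; calling it again creates nothing, which makes it safe to run at the start of every session.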

Follow for more AI for operators.

Andres

References

[1] Meta Buys AI Startup Manus for More Than $2 Billion - WSJ

[2] Effective context engineering for AI agents - Anthropic

[3] Meta Acquires Manus AI for $2B - ALM Corp