How I ran an AI build on a real operations problem inside a regulated bank

A case study in building operational efficiency with AI inside a regulated bank, and what founders and operators can learn if they want to solve real workflow problems in their own businesses.

Hey Friends 👋 Happy Wednesday

Here’s another weekly dose of AI ways of working.

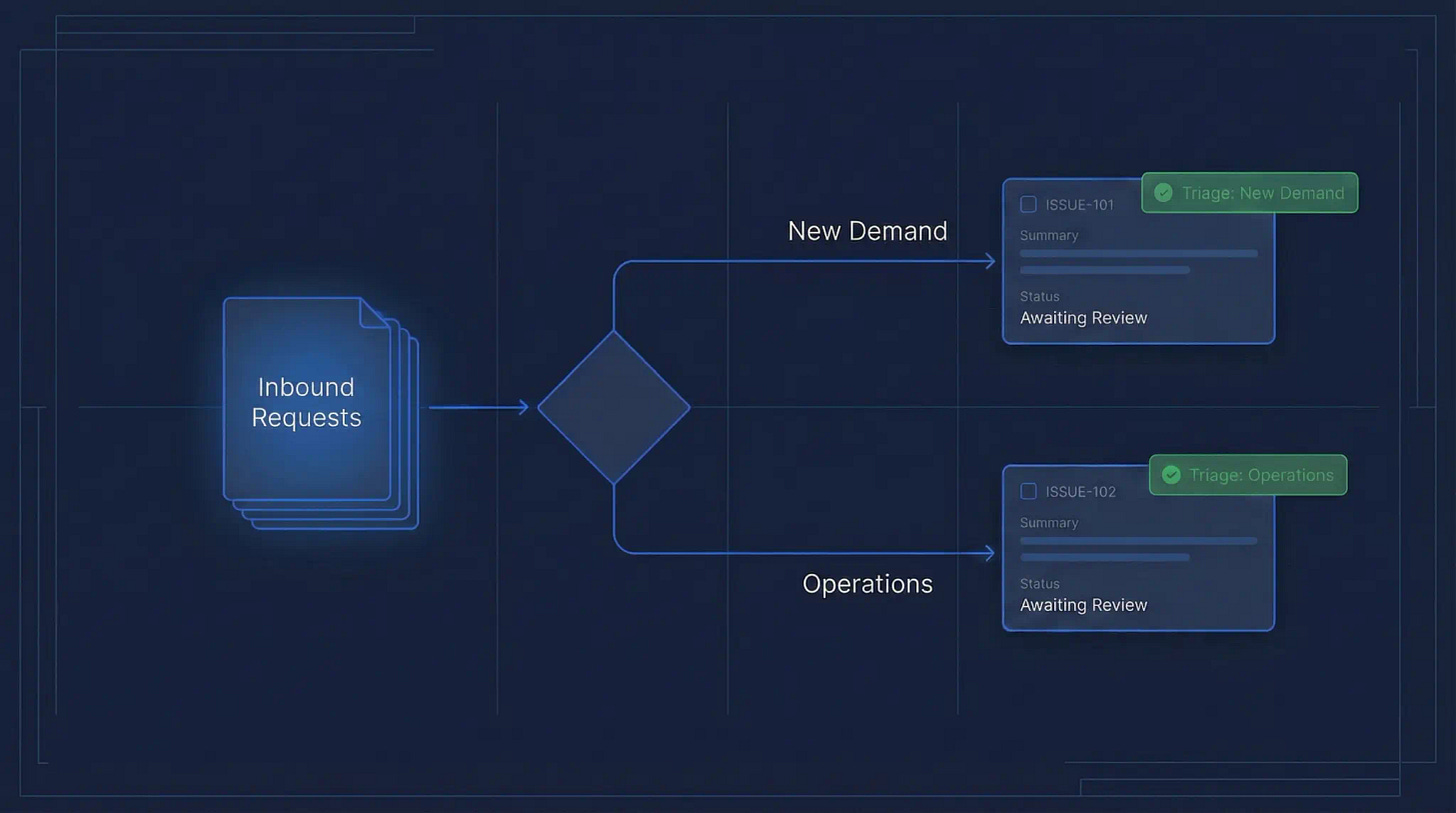

Visual overview of the AI triage workflow and the two routing outcomes: new demand and operations.

I built an AI triage agent inside a regulated UK bank using Claude Code. It reads incoming Jira tickets, classifies them as new platform demand or operational work, writes its reasoning back into the ticket, and labels everything for automated routing. It runs against a real backlog. People on the team will act on what it produces.

This piece is really a case study. Not just on the build itself, but on what it looks like to use AI to solve a real operations problem in a regulated environment.

For founders and operators, that’s the part that matters. The opportunity is not in building flashy demos. It’s in creating operational efficiencies inside the workflows that already run your business.

The useful lesson isn’t that the build worked. It’s what founders and operators can learn from using AI to improve a real operational process inside a live business environment.

The problem, and why it’s bigger than it looks

Before the methodology, the failures, or the architecture, the problem deserves more attention than most writeups give it. Without understanding why demand triage is genuinely hard, the build looks like a clever solution to a minor inconvenience. It isn’t.

Here’s what actually happens in most platform teams, central functions, and shared services at any organisation of meaningful size.

Demand arrives constantly. Some of it is operational: a broken service, a permissions issue, a configuration change on something that already exists. Fix it, close it, move on. Some of it is capability demand: a net-new workload, a new integration, a new environment that doesn’t exist yet. Design it, scope it, plan it, build it over weeks or months.

These are fundamentally different categories of work. They require different people, different processes, different timelines, and different conversations with leadership. Operations is about maintaining what exists. Capability is about building what doesn’t.

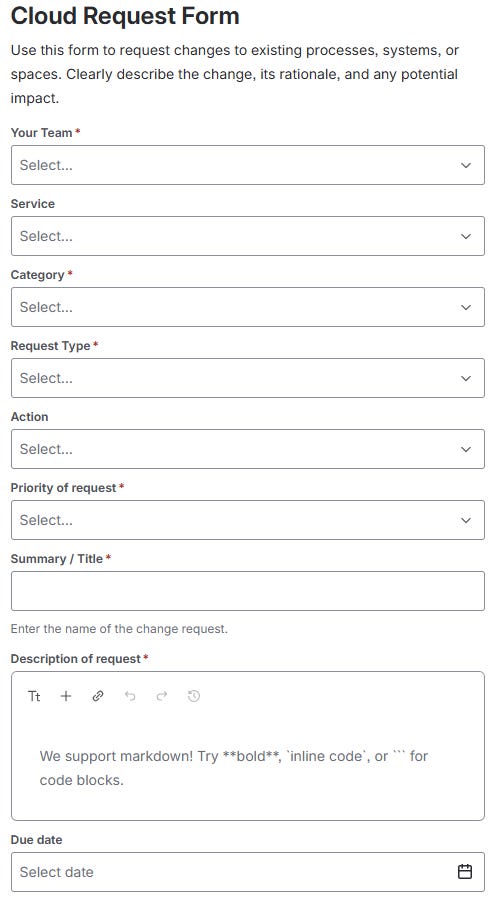

The problem is that they arrive through the same front door.

Every request, whether it takes a senior engineer three months to deliver or a junior engineer three hours, lands in the same Jira project. Someone has to read it and make a call. Is this keeping the lights on, or is this building something new?

That sounds like a simple question. It isn’t.

Operational work and strategic demand often arrive in exactly the same place, written in exactly the same language.

An access request for a new AWS account might be routine operations. Or it might be the first signal of a new workload that needs a full landing zone, a security review, and six months of platform design. A request to update firewall rules might be a two-line config change. Or it might be the start of a network architecture conversation nobody’s had yet. The summary rarely tells you which. The description is often missing entirely. The person who submitted it frequently doesn’t know the difference themselves.

So the decision falls to the people who do know: delivery managers, business analysts, senior architects. People whose time is expensive and whose judgement is built from years of platform context. They read the ticket, apply that judgement, and route it correctly.

Until they’re stretched. Until they’re on leave. Until three urgent things land at the same time and the backlog review gets pushed. Until the junior on the team picks up a ticket and makes a reasonable guess that turns out to be wrong — and a piece of strategic platform demand sits in the wrong queue for three weeks before anyone notices.

This is the failure mode. Not dramatic. Not a single incident. Just a slow, consistent bleed of misrouted work, missed priorities, and decisions that depended on the right person being available at exactly the right time.

At scale, across a portfolio of fifty or a hundred active tickets, this costs more than most organisations have ever tried to measure. Demand that should have been scoped six weeks ago. Operations work that consumed senior architect time. Strategic requests buried under routine noise.

The shared front door: a single intake form where operational requests and net-new platform demand arrive together.

The agent I built solves the classification problem. Every incoming ticket gets read, assessed against the platform’s actual context (active initiatives, existing patterns, routing rules), and classified before a human ever looks at it. The reasoning is attached directly to the ticket. The label is applied automatically. The humans who used to spend time making that call now spend their time on the cases the agent flags as genuinely ambiguous.

That’s a different way of working. And it’s repeatable across any team, in any sector, where demand arrives faster than the people responsible for routing it can keep up.

For founders, the same problem exists in your business. Support requests versus product feedback. Client issues versus new feature demand. Operational fixes versus strategic investment. If those categories are currently separated by whoever happens to read the email first, you have the same problem at a smaller scale. The solution is the same.

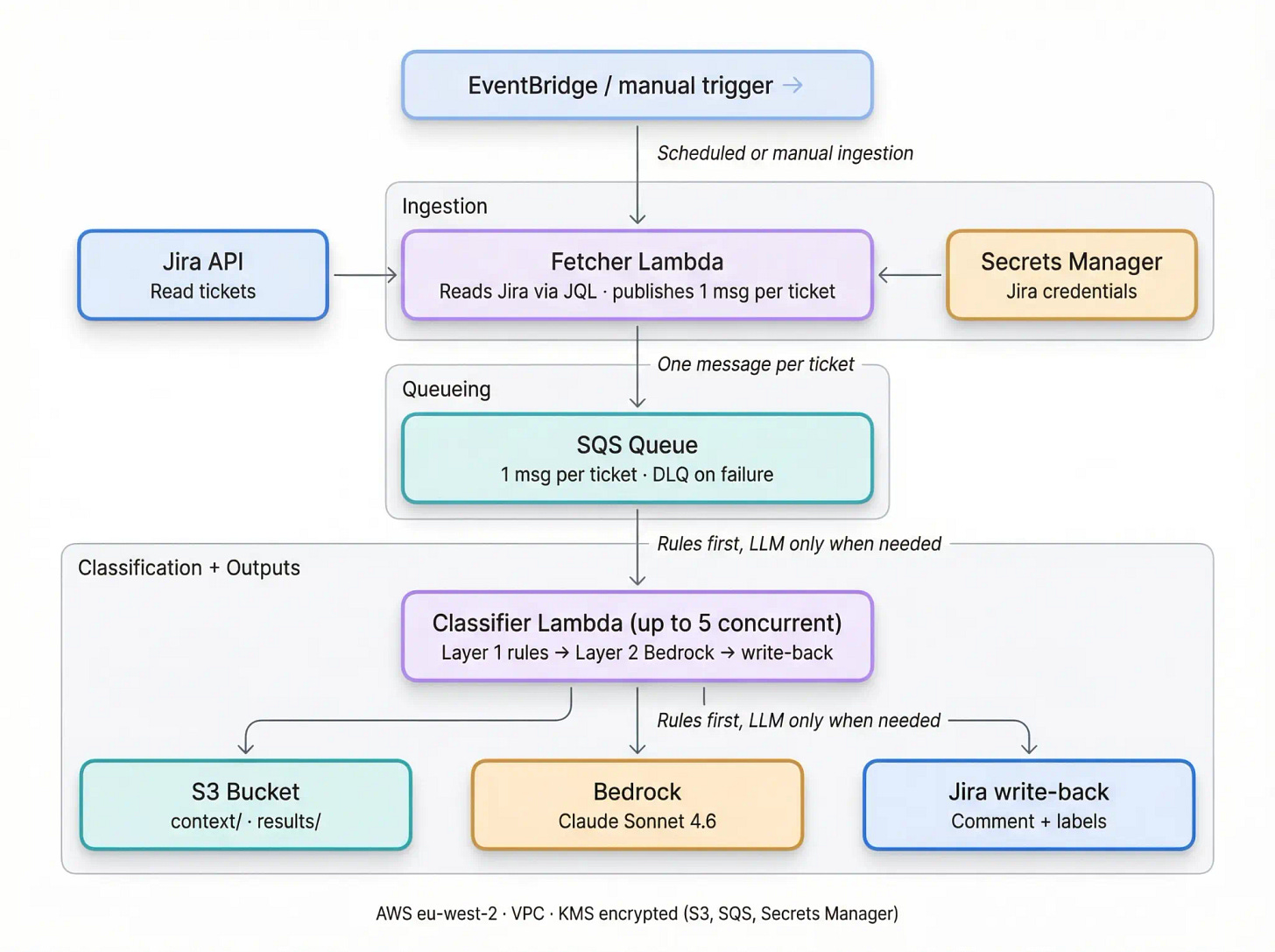

The operating architecture behind the build: ingestion, queueing, classification, Bedrock enrichment, storage, and Jira write-back.

The methodology before I wrote a single line of code

Most content about Claude Code covers short solo tasks. An afternoon project, a script, a quick component. The context window barely fills up, everything completes in one session, and the output is self-contained.

A build like this, even when done in under two weeks, is completely different. There’s existing context, decisions already made, work already in flight, conventions already established. And every Claude Code session starts cold. The model has no memory of what you did yesterday. You are the continuity. That’s the problem context management solves, and it’s the part nobody writes about because most people haven’t had to solve it yet.

Every session starts cold. You are the memory.

Here’s how I structured it.

The context files. Before starting the build I identified three documents that held the most relevant organisational context: a conventions file covering how the team works and what standards apply; an initiatives register covering active work, status, and dependencies; and a patterns catalogue listing reusable components already built. These files didn’t exist for AI; they existed because the team already had good documentation discipline. They became the foundation of every session. Loaded at the start, refreshed when they changed.

If your business doesn’t have these files, creating them is the first step, not the Claude Code build. The agent is only as useful as the context it has to work with. For founders, that means documenting how your operations actually run before you try to automate them. That process alone will surface problems you didn’t know you had.

The 50–60% rule. I kept the context window at around 50–60% capacity throughout the build. The reason is simple: a model reasoning over a full context window has less room to work than one with space to think. When the window got heavy (accumulated output, long debugging threads, generated files), I started a new session and reloaded the context files. It felt inefficient. It wasn’t. The outputs were more consistent, and the model made fewer errors on the second half of a task than on the first half of an overloaded one.

Planning mode before execution mode. Before writing any code I ran a dedicated planning session using the most capable model available. I gave it the full brief: the problem, the constraints, the desired output, the platform context. What came back in a single session was a complete planning artefact set: a user story with acceptance criteria, BDD scenarios covering the main classification cases, and architecture decision records (ADRs) for the key technical choices.

That output shaped every decision that followed. The acceptance criteria became my failure conditions. The ADRs became the record I could show the team. The scenarios became the test cases I ran the agent against. Two hours in planning mode before touching a line of code saved at least two days of rework. I won’t skip this step again.

Two hours of planning saved at least two days of rework.

The session handoff file. At the end of every working session I updated a short document: what was built, what came next, decisions made, open questions. This is what let me pick up the build the following evening without losing the thread.

Thirteen years of delivery management taught me that the handoff is where projects lose momentum. A decision made on Tuesday gets lost by Thursday if it isn’t written down. The same is true for AI-assisted builds, except the stakes are higher because the model has no continuity at all. You are the continuity. Write it down.
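As an illustration only, the handoff note can be generated from a small structure so that the four headings never get skipped. The class name, field names, and markdown layout below are assumptions made for this sketch, not the format used in the build.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class Handoff:
    """The four things the handoff note records after each session."""
    built: List[str]
    next_steps: List[str]
    decisions: List[str] = field(default_factory=list)
    open_questions: List[str] = field(default_factory=list)


def _section(title: str, items: List[str]) -> str:
    # Always emit the heading, even when empty, so gaps are visible.
    lines = [f"## {title}"]
    lines += [f"- {item}" for item in (items or ["(none)"])]
    return "\n".join(lines)


def render(handoff: Handoff, day: date) -> str:
    """Render the handoff as a short markdown note for the next session."""
    parts = [
        f"# Session handoff {day.isoformat()}",
        _section("What was built", handoff.built),
        _section("What comes next", handoff.next_steps),
        _section("Decisions made", handoff.decisions),
        _section("Open questions", handoff.open_questions),
    ]
    return "\n\n".join(parts) + "\n"
```

The rendered text can be saved next to the context files and loaded at the start of the following session.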

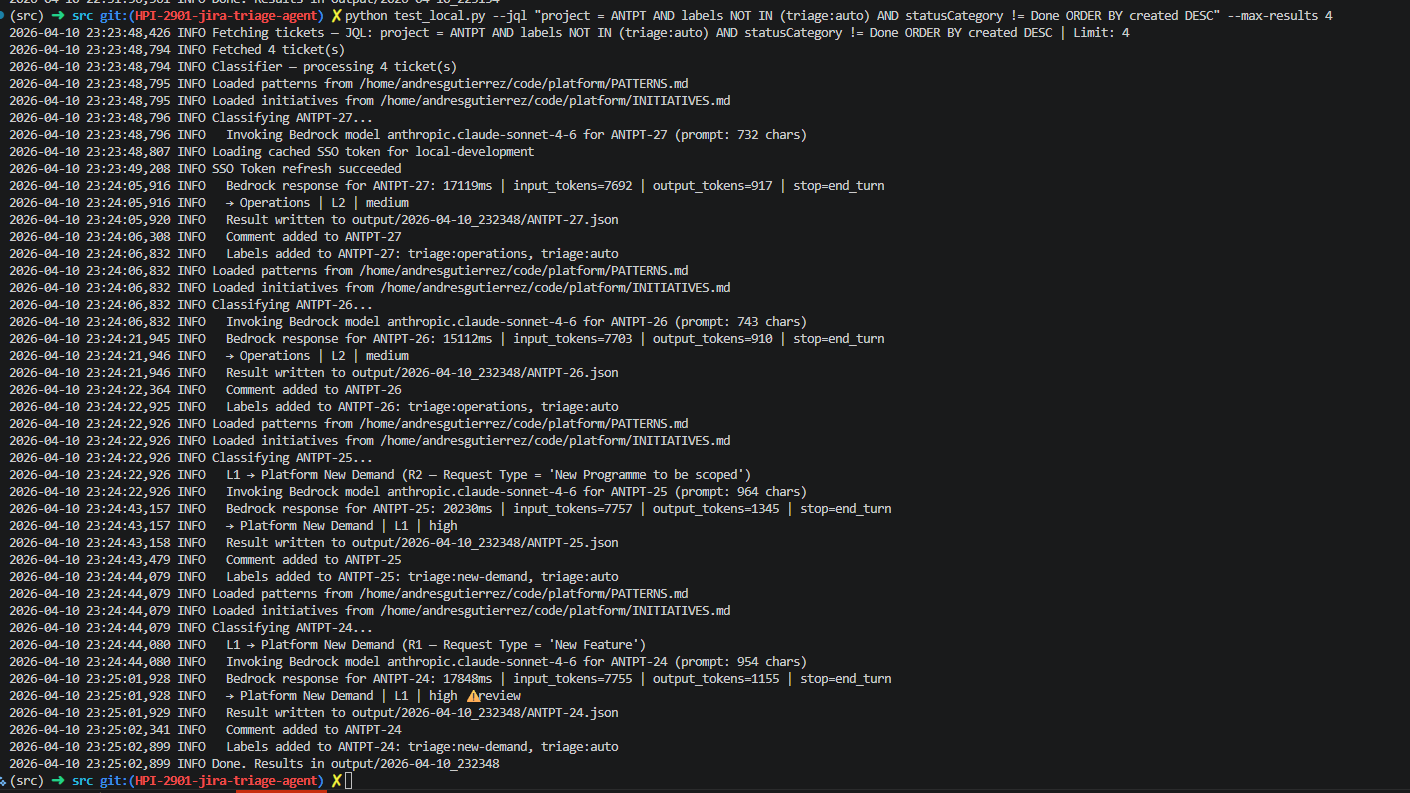

The agent running after the failures were fixed: Layer 1 firing deterministically, Bedrock being called for the ambiguous tickets, comments and labels written back to Jira. Getting here required fixing three separate problems first.

The failures

The first run didn’t work. Here’s exactly what happened.

Environment access took longer than expected. Before writing a single line of code I lost time to access issues — network permissions, credentials, tooling setup. In a regulated environment this is predictable. In any environment with real security controls, it’s possible.

Treat access setup as a project phase, not a prerequisite you can sort on the fly. Identify every system your agent will need to touch. Get the credentials and permissions sorted before you start building. Test connectivity before you assume it works. I didn’t, and it cost me a full day.

Treat access like part of the build, not something to sort out halfway through.
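A minimal sketch of what "test connectivity before you assume it works" could look like as a preflight script. The endpoint names and hostnames are placeholders, and a successful TCP connection only proves the network path is open. It says nothing about whether credentials or permissions are valid, which need their own checks.

```python
import socket
from typing import Callable, Dict, Iterable, Optional, Tuple

# Placeholder endpoints (assumptions): list every system the agent touches.
ENDPOINTS = [
    ("jira", "jira.example.internal", 443),
    ("model-api", "models.example.internal", 443),
]


def check_endpoints(
    endpoints: Iterable[Tuple[str, str, int]],
    connect: Callable = socket.create_connection,
    timeout: float = 3.0,
) -> Dict[str, Optional[str]]:
    """Try a TCP connection to each endpoint.

    Returns {name: None} when reachable, or {name: "ErrorType: message"}.
    """
    results: Dict[str, Optional[str]] = {}
    for name, host, port in endpoints:
        try:
            conn = connect((host, port), timeout=timeout)
            conn.close()
            results[name] = None
        except OSError as exc:  # covers DNS failures, refusals, timeouts
            results[name] = f"{type(exc).__name__}: {exc}"
    return results


# Usage (performs real network calls, so run it from the build environment):
#   for name, error in check_endpoints(ENDPOINTS).items():
#       print(name, "OK" if error is None else error)
```

The `connect` parameter exists so the check can be exercised with a fake connection in tests, without touching the network.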

The data wasn’t in the format I expected. The first classification run failed immediately. Jira returns ticket descriptions as Atlassian Document Format — a deeply nested JSON structure, not readable text. The model was receiving something it couldn’t reason over. I had to build a recursive extractor to parse it and return clean text. Forty lines of code. Two hours to diagnose, twenty minutes to fix.

Real environments don’t give you clean inputs. Whatever your agent reads from (a CRM, a helpdesk, a form), assume the raw format will need processing before the model can use it. Build the extractor first, before the classification logic. Test it on real data before you test anything else.
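For readers who have not met Atlassian Document Format, here is a minimal sketch of a recursive extractor. It is not the code from the build. It assumes the usual ADF shape, where nodes are dictionaries with a `type`, text lives in `text` nodes, and children sit under `content`. It deliberately ignores richer nodes such as mentions, emoji, and tables.

```python
from typing import Any

# Node types after which a line break is added (a simplification).
BLOCK_TYPES = {"paragraph", "heading", "codeBlock"}


def adf_to_text(node: Any) -> str:
    """Recursively collect readable text from an ADF node, list, or None."""
    if isinstance(node, list):
        return "".join(adf_to_text(child) for child in node)
    if not isinstance(node, dict):
        return ""  # covers a missing description (None) and stray values

    node_type = node.get("type")
    if node_type == "text":
        return node.get("text", "")
    if node_type == "hardBreak":
        return "\n"

    text = adf_to_text(node.get("content", []))
    if node_type in BLOCK_TYPES and text and not text.endswith("\n"):
        text += "\n"
    return text


sample = {
    "type": "doc",
    "version": 1,
    "content": [
        {"type": "paragraph", "content": [
            {"type": "text", "text": "Need a new AWS account"},
            {"type": "text", "text": " for the payments team."},
        ]},
        {"type": "bulletList", "content": [
            {"type": "listItem", "content": [
                {"type": "paragraph", "content": [
                    {"type": "text", "text": "Prod and test"},
                ]},
            ]},
        ]},
    ],
}

print(adf_to_text(sample))
```

Running the extractor against a handful of real ticket descriptions before wiring up any classification is the quickest way to find the node types a sketch like this misses.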

The deterministic rules matched nothing. The fast filter I built, the layer that handled obvious cases without calling the AI, matched zero records on the first run. The rules checked for “New Feature.” The data said “new feature.” Only the casing differed. I built a normaliser: strip punctuation, lowercase everything, handle common variations. Deterministic logic only works when you account for how humans actually enter data. They don’t use consistent casing. They abbreviate. They use different words for the same thing. Build the normaliser before you build the rules.

Humans do not enter data cleanly. Build for that first.
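A minimal sketch of a normaliser along those lines: lowercase, strip punctuation, collapse whitespace, then map known variations onto one canonical value. The alias table and the rule set are invented for illustration. The real variations have to come from looking at the actual data.

```python
import re
import string
from typing import Optional

# Hypothetical variations mapped to one canonical value.
ALIASES = {
    "feature request": "new feature",
    "new feat": "new feature",
}

# Translation table that turns every ASCII punctuation character into a space.
_PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def normalise(value: Optional[str]) -> str:
    """Lowercase, strip punctuation, collapse whitespace, apply aliases."""
    text = (value or "").lower().translate(_PUNCT_TO_SPACE)
    text = re.sub(r"\s+", " ", text).strip()
    return ALIASES.get(text, text)


# Rules are written once, against the canonical form only.
NEW_DEMAND_VALUES = {"new feature"}


def matches_new_demand(field_value: Optional[str]) -> bool:
    """Deterministic check that tolerates casing and punctuation noise."""
    return normalise(field_value) in NEW_DEMAND_VALUES


print(matches_new_demand("New Feature"))     # True
print(matches_new_demand("  new-feature."))  # True
print(matches_new_demand("Bug"))             # False
```

Keeping the rules in canonical form means every new variation becomes a one-line alias rather than another rule.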

The architecture needed fixing mid-build. I had external dependencies threaded through the core logic in a way that would have caused problems in production. A more experienced engineer pointed me towards a cleaner pattern, separating the core classification logic from all external integrations so it could run identically in development and production.

I couldn’t have got there alone. What I had was enough of a relationship with the right person to ask the question, and enough technical understanding to know something was wrong. That’s the honest version of “ask for help”. It’s not about humility. It’s about knowing which gaps you have and closing them before you build yourself into a corner.
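The exact pattern used in the build isn’t described here, but one common way to separate core logic from integrations is to make the core depend on small interfaces and pass the real or fake implementations in from outside. The sketch below assumes that approach. Every class name, the keyword rule, and the labels are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Protocol, Tuple


@dataclass
class Ticket:
    key: str
    summary: str
    description: str


class TicketSource(Protocol):
    """Anything that can supply tickets (a Jira client, a fixture file)."""
    def fetch(self) -> List[Ticket]: ...


class ResultSink(Protocol):
    """Anything that can receive results (Jira write-back, a log, a list)."""
    def publish(self, key: str, label: str, reasoning: str) -> None: ...


def classify(ticket: Ticket) -> Tuple[str, str]:
    """Core logic: pure function, no network, no credentials."""
    text = f"{ticket.summary} {ticket.description}".lower()
    if "new account" in text or "new environment" in text:
        return "new-demand", "Mentions something that does not exist yet."
    return "operations", "No net-new capability signal found."


def run(source: TicketSource, sink: ResultSink) -> int:
    """Wire a source and a sink around the core. Returns tickets processed."""
    count = 0
    for ticket in source.fetch():
        label, reasoning = classify(ticket)
        sink.publish(ticket.key, label, reasoning)
        count += 1
    return count


class InMemorySource:
    """Development stand-in for the real ticket system."""
    def __init__(self, tickets: List[Ticket]) -> None:
        self.tickets = tickets

    def fetch(self) -> List[Ticket]:
        return list(self.tickets)


class InMemorySink:
    """Development stand-in that just records what would be published."""
    def __init__(self) -> None:
        self.published: List[Tuple[str, str, str]] = []

    def publish(self, key: str, label: str, reasoning: str) -> None:
        self.published.append((key, label, reasoning))
```

Because `run` only sees the two interfaces, the same core runs in development against the in-memory classes and in production against real integrations.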

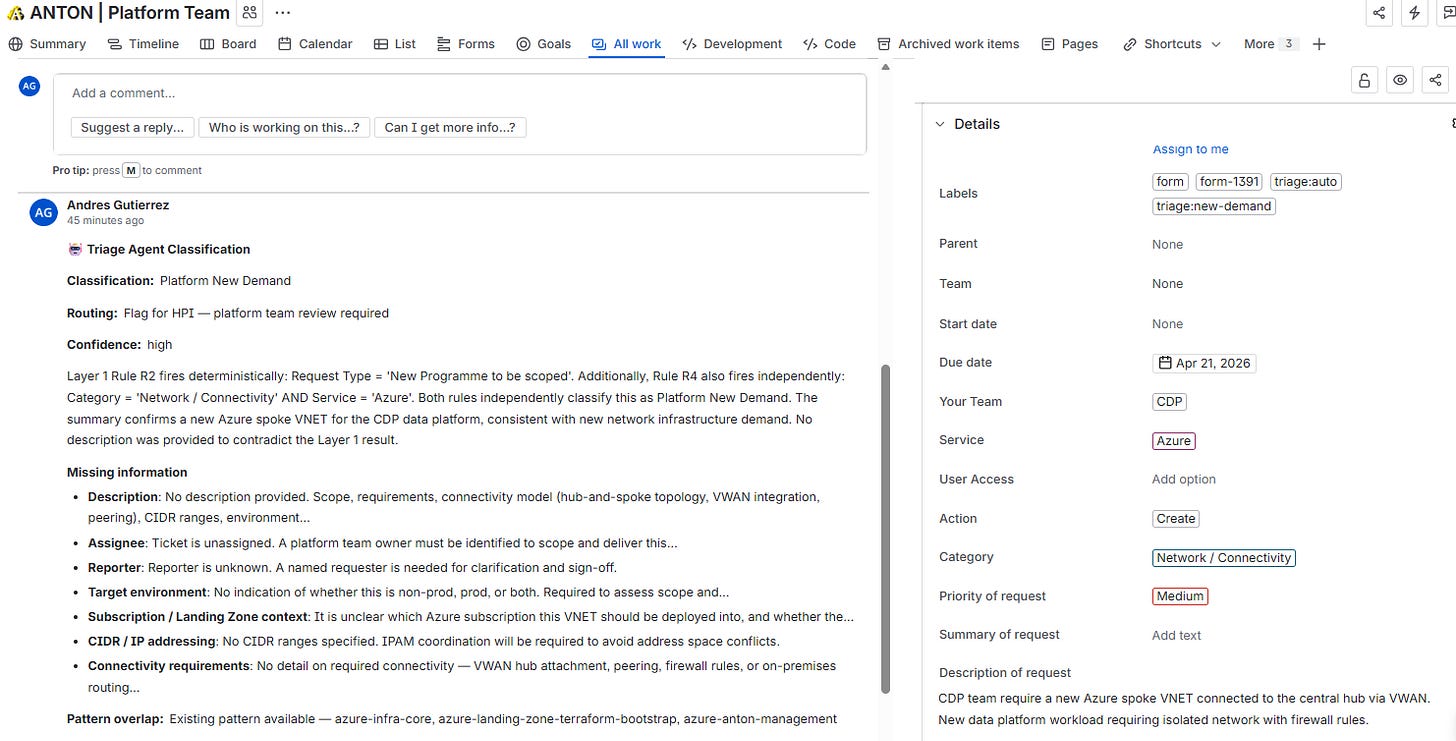

Layer 1 firing deterministically — two independent rules triggered, Bedrock enriched the result and identified an existing pattern in the catalogue as an overlap. This is what a correct new demand classification looks like in Jira.

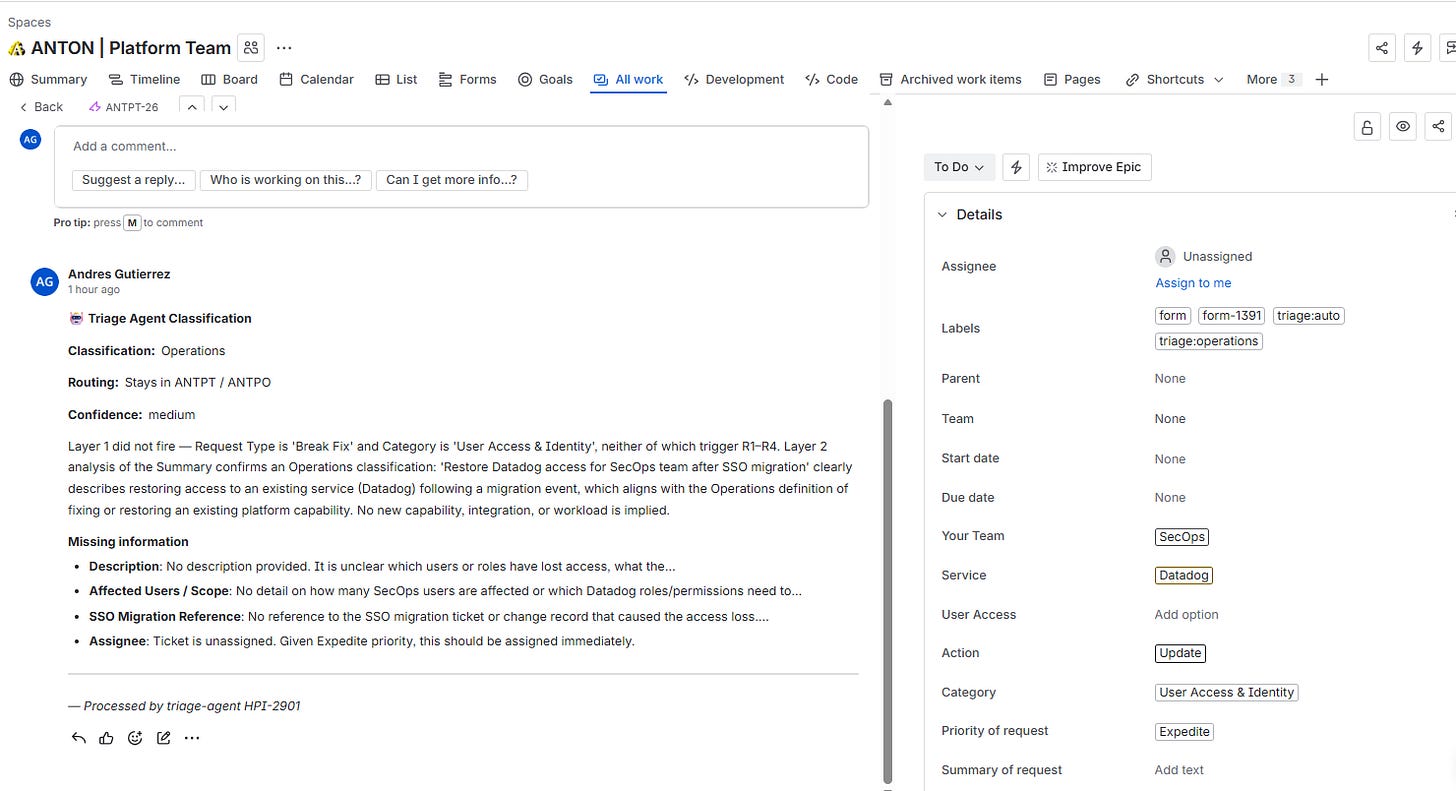

Layer 2 on an operations ticket — Layer 1 found no match, so Bedrock analysed the description against the platform context. Medium confidence, full reasoning attached, missing information flagged. The agent knows what it doesn’t know.
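The two captions describe a layered flow: deterministic rules first, the model only when the rules find nothing. A minimal sketch of that control flow follows. The rules are invented, the model call is an injected function rather than a real Bedrock request, and the enrichment step that runs after a Layer 1 match is left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Classification:
    label: str        # "new-demand" or "operations"
    confidence: str   # "high", "medium" or "low"
    reasoning: str
    layer: int        # 1 = deterministic rules, 2 = model
    missing_info: List[str] = field(default_factory=list)


# Hypothetical Layer 1 rules: (name, predicate over lowercased text).
RULES = [
    ("mentions new workload", lambda text: "new workload" in text),
    ("requests landing zone", lambda text: "landing zone" in text),
]


def layer_one(text: str) -> Optional[Classification]:
    """Return a classification if any rule fires, otherwise None."""
    lowered = text.lower()
    fired = [name for name, predicate in RULES if predicate(lowered)]
    if not fired:
        return None
    return Classification(
        label="new-demand",
        confidence="high",
        reasoning="Rules fired: " + ", ".join(fired),
        layer=1,
    )


def triage(text: str, model: Callable[[str], Classification]) -> Classification:
    """Cheap deterministic layer first; fall back to the model otherwise."""
    result = layer_one(text)
    return result if result is not None else model(text)
```

Carrying `confidence` and `missing_info` in the result is what lets a comment on the ticket say what the agent could not determine, rather than presenting every call as certain.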

What the AI operator role actually requires

The AI operator role is a process role with technical capability built in. Not a technical role with some process knowledge bolted on. That distinction matters, and it’s why founders and operators are better positioned for this than most people currently claiming the title.

The AI operator role is a process role with technical capability built in.

But there are prerequisites nobody talks about honestly.

You need access to the actual problem. This build only happened because I had access to the environment, the data, and the people. I couldn’t have walked in cold and built it. The access came from relationships and trust built over two years before any of this started.

If you’re early in a role or a client relationship, your job right now isn’t to build AI tools. It’s to earn the access that makes building them possible. For founders, this is less of a barrier: you already have access to your own systems, your own data, your own team. That’s a genuine advantage. Use it.

You need at least one technical collaborator. I have enough technical understanding to design a system, read documentation, and know when something is wrong. I don’t have the vocabulary to know which architectural pattern to reach for when the structure needs fixing. Someone else did. Most content about AI operators assumes you’re working alone. The best builds I’ve seen involve at least one person who can catch what the operator misses. Find them before you start, not when you’re stuck.

You need to document what breaks. I wrote up the first run failing. I published it. Knowing I was going to write about what broke made me pay closer attention to why it broke, which is the only reason I know exactly what went wrong. If you’re not willing to document the failures alongside the wins, you’ll find reasons not to start. And you’ll learn less when you do.

You need to already understand the problem. I knew which problem was worth solving before I opened Claude Code. I knew what the output needed to look like. I knew the failure conditions. That knowledge came from years of working closely with operations teams and understanding how work actually flows. The tools are accessible. The understanding of which problem to solve, and what good looks like, is harder to acquire. That’s where your advantage sits, and it’s not something Claude Code can give you.

What I’d do differently

Sort access and credentials before the first session. This should be a completed prerequisite, not something you discover is broken on day one. List every system your agent touches. Get confirmation that each one is accessible from your build environment before you write anything.

Build a test environment and dry-run mode from the start. Run every early test against dummy data. Build the ability to classify without writing back to the real system before you run against anything real. I got away without this because I was working read-only. That was luck, not good practice. If your agent writes back to anything (a CRM, a helpdesk, a database), protect yourself from day one.
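One way to get a dry-run mode, sketched under assumptions: all write-backs go through a single writer interface, and the default writer only records what it would have done. The interface, method names, and comment format are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Protocol, Tuple


class TicketWriter(Protocol):
    """The only route by which the agent may change a ticket."""
    def add_label(self, key: str, label: str) -> None: ...
    def add_comment(self, key: str, body: str) -> None: ...


@dataclass
class DryRunWriter:
    """Records intended writes instead of performing them."""
    log: List[Tuple[str, str, str]] = field(default_factory=list)

    def add_label(self, key: str, label: str) -> None:
        self.log.append(("label", key, label))

    def add_comment(self, key: str, body: str) -> None:
        self.log.append(("comment", key, body))


def write_back(writer: TicketWriter, key: str, label: str, reasoning: str) -> None:
    """Apply the label and attach the reasoning through the given writer."""
    writer.add_label(key, label)
    writer.add_comment(key, f"Triage agent: {label}\n\n{reasoning}")


def make_writer(
    dry_run: bool = True,
    live_factory: Optional[Callable[[], TicketWriter]] = None,
) -> TicketWriter:
    """Default to dry-run; a live writer has to be requested explicitly."""
    if dry_run or live_factory is None:
        return DryRunWriter()
    return live_factory()
```

Making dry-run the default means a forgotten flag produces a log of intended changes rather than changes to a real backlog.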

Trim the context passed to the model. I was loading the full documentation catalogue on every classification call. That’s expensive and slow. Pass only the slices relevant to the ticket. The more precisely you retrieve context, the cheaper and faster the system becomes.
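A deliberately simple sketch of passing only the relevant slices: score each documentation section by word overlap with the ticket and keep the top few. Plain keyword overlap is a stand-in here. A real system might use embeddings or tags instead, and the section names and contents below are invented.

```python
import re
from typing import Dict, List, Set


def words(text: str) -> Set[str]:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(ticket_text: str, sections: Dict[str, str], top_k: int = 2) -> List[str]:
    """Names of up to top_k sections sharing the most words with the ticket."""
    wanted = words(ticket_text)
    scored = [(len(wanted & words(body)), name) for name, body in sections.items()]
    scored = [item for item in scored if item[0] > 0]  # drop irrelevant sections
    scored.sort(key=lambda item: (-item[0], item[1]))  # best first, ties by name
    return [name for _, name in scored[:top_k]]


sections = {
    "networking": "firewall rules vpc peering network routes",
    "accounts": "aws account vending landing zone",
    "observability": "logging dashboards alerts",
}

print(select_context("Please update firewall rules for the payments VPC", sections))
```

Only the selected sections are then added to the prompt, so cost and latency scale with the ticket rather than with the size of the catalogue.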

Record every session from the start. The notes from the second week of this build were invaluable for writing this piece. The first week is largely gone. Start a running log on day one, even if it’s rough. You’ll use it.

If you want to build with AI over days, you need a handoff system. If you want to build over weeks, you need it even more.

The honest version of the opportunity

Building this gave me a glimpse of what operations could look like in the next few years. Not a prediction, a glimpse.

The core of good operations doesn’t change. Understanding how work flows, where it gets stuck, how to design a better state, and how to get people to trust it: that’s still the job. What changes is the execution layer. The tools available to act on that understanding just got significantly more powerful.

The founders and operators who identify the right problems, build the access and relationships to solve them, find their technical collaborators, and get their hands dirty are the ones who will drive real efficiencies in their businesses.

That’s not a prediction about who survives. It’s an observation about what becomes possible.

I’m building more of these. I’ll keep sharing what I learn.